Energy – AI Energy Solutions

LSTM vs Transformer: Next-Day Energy Forecasting on Smart Grid

Fictional | 03/01/2026 to 21/01/2026

Context

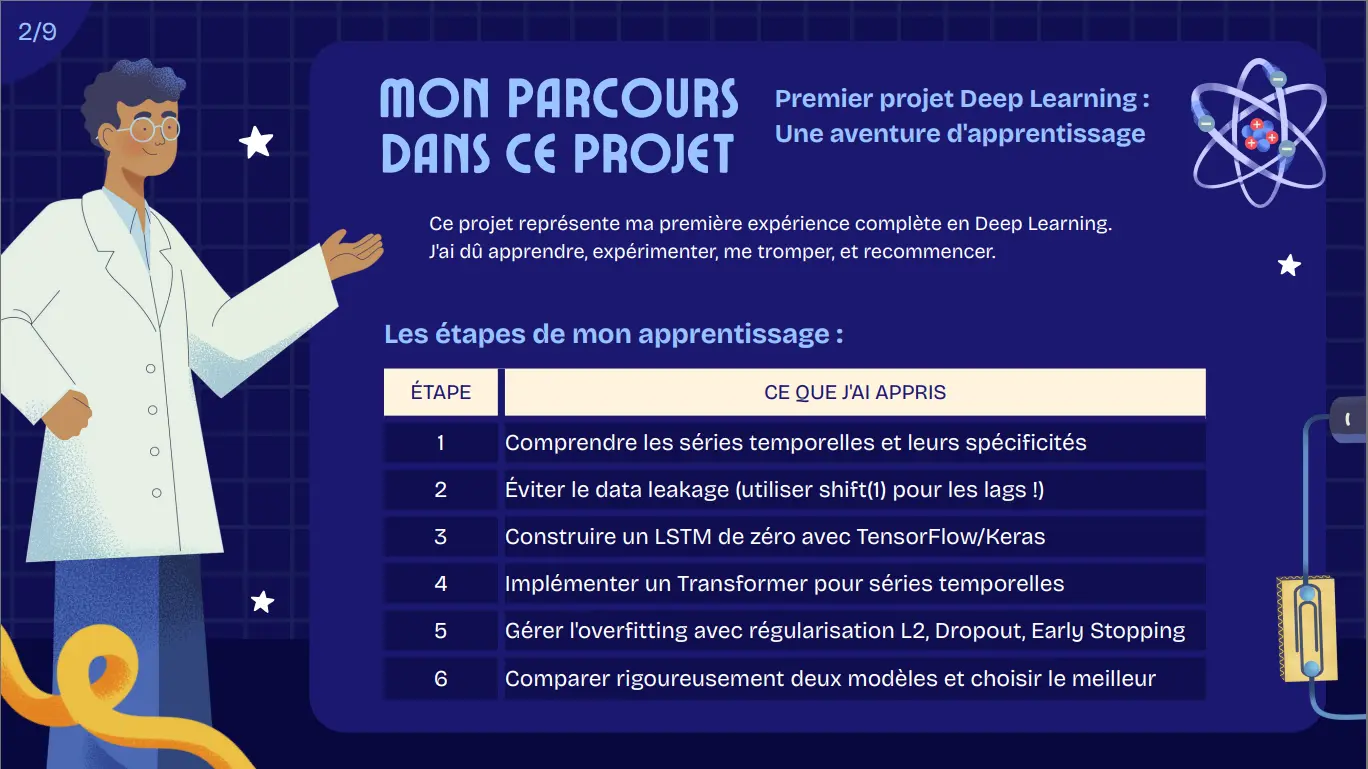

I was commissioned by AI Energy Solutions (fictional), a smart grid company managing 50,000 households in Île-de-France, to develop a deep learning forecasting system for daily energy purchasing on the EPEX spot market.

My role:

- Compare LSTM and Transformer architectures for 24-hour consumption prediction

- Engineer features combining historical patterns, weather data, and calendar events

- Reach a mean absolute error (MAE) below 0.5 kW to reduce the €62.5M in annual losses caused by over- and under-provisioning

Datasets

UCI Individual Household Electric Power Consumption dataset: real measurements from a single household in Sceaux (Paris suburb), December 2006 to November 2010

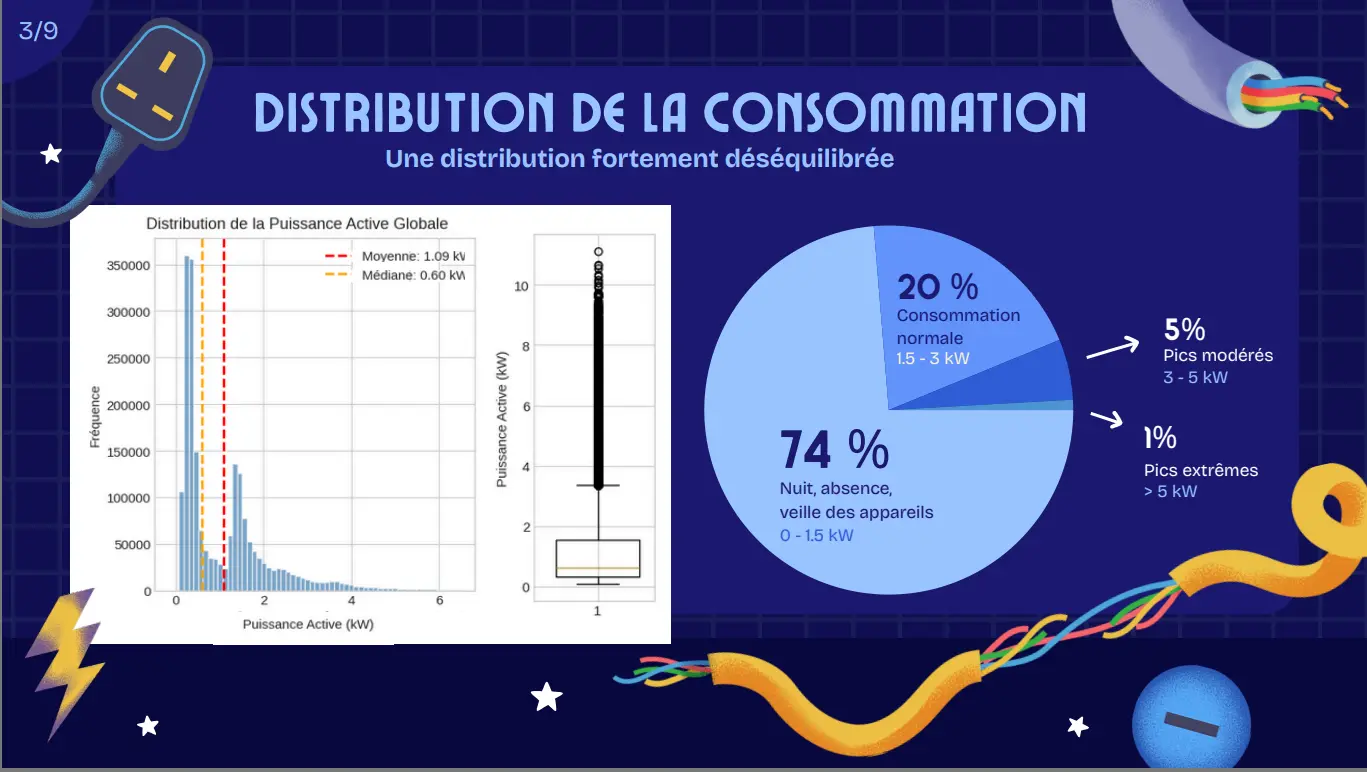

2,049,280 minute-level observations → 34,589 hourly after aggregation
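The minute-to-hourly aggregation can be sketched with pandas. This is a minimal sketch, assuming the raw file keeps the standard UCI layout (semicolon-separated, `Date` in dd/mm/yyyy, `?` for missing readings) and that each hourly value is the mean of the available minutes; `load_hourly` and the three-row `SAMPLE` are illustrative, not code from the project notebook.

```python
import io

import pandas as pd

# Three rows in the UCI column layout; '?' marks a missing minute.
SAMPLE = """Date;Time;Global_active_power;Global_reactive_power;Voltage;Global_intensity;Sub_metering_1;Sub_metering_2;Sub_metering_3
16/12/2006;17:24:00;4.216;0.418;234.840;18.400;0.000;1.000;17.000
16/12/2006;17:25:00;?;?;?;?;?;?;?
16/12/2006;18:00:00;2.000;0.100;240.000;10.000;0.000;0.000;0.000
"""


def load_hourly(source) -> pd.DataFrame:
    """Read minute-level readings and average them into hourly values."""
    df = pd.read_csv(source, sep=";", na_values="?", low_memory=False)
    # Date is day-first (dd/mm/yyyy); merge it with Time into one DatetimeIndex.
    df.index = pd.to_datetime(df["Date"] + " " + df["Time"], format="%d/%m/%Y %H:%M:%S")
    df = df.drop(columns=["Date", "Time"]).astype(float)
    # Hourly mean per variable: NaN minutes are skipped, fully empty hours stay NaN.
    hourly = df.resample(pd.offsets.Hour()).mean()
    # Drop the hours that remain empty (gaps in the raw record).
    return hourly.dropna()


hourly = load_hourly(io.StringIO(SAMPLE))
print(hourly["Global_active_power"].tolist())  # [4.216, 2.0]
```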

7 variables: global active/reactive power, voltage, intensity, 3 sub-meters

Target: Global Active Power (kW)

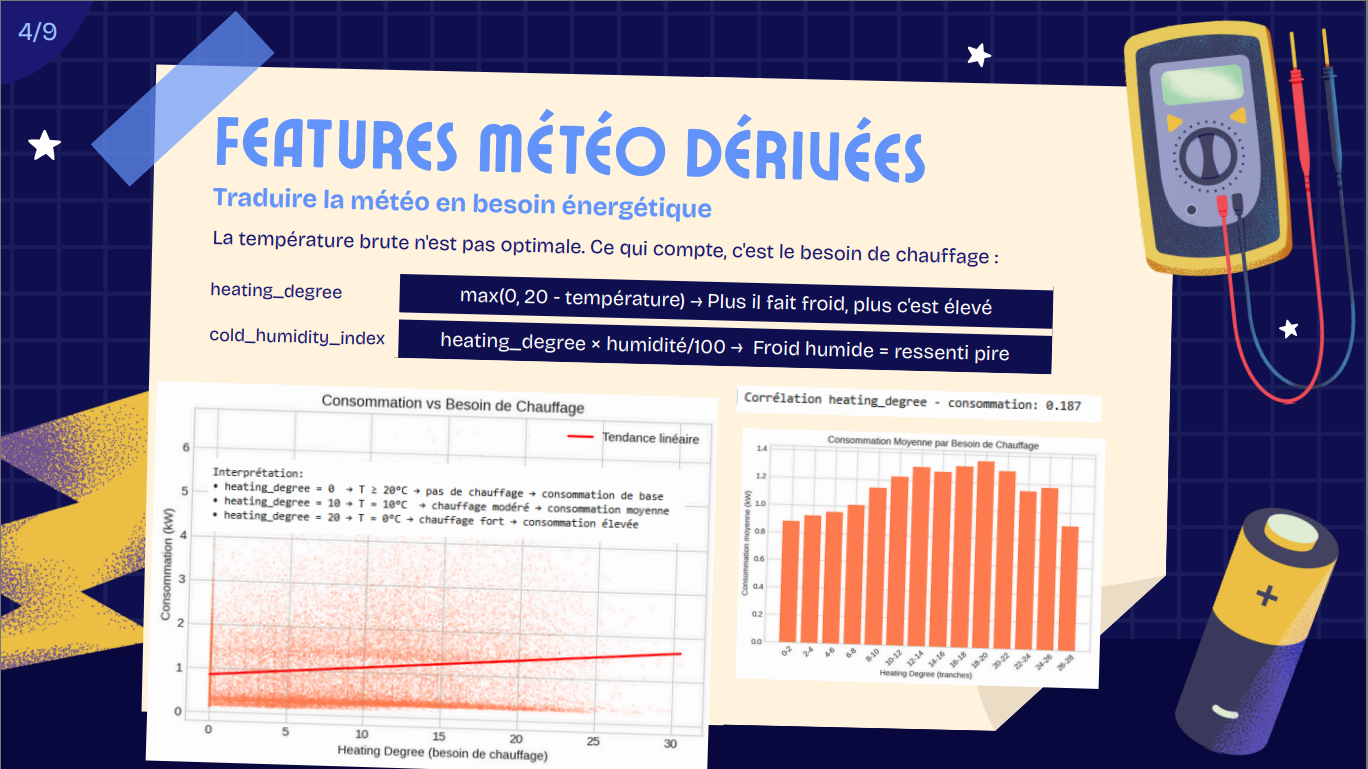

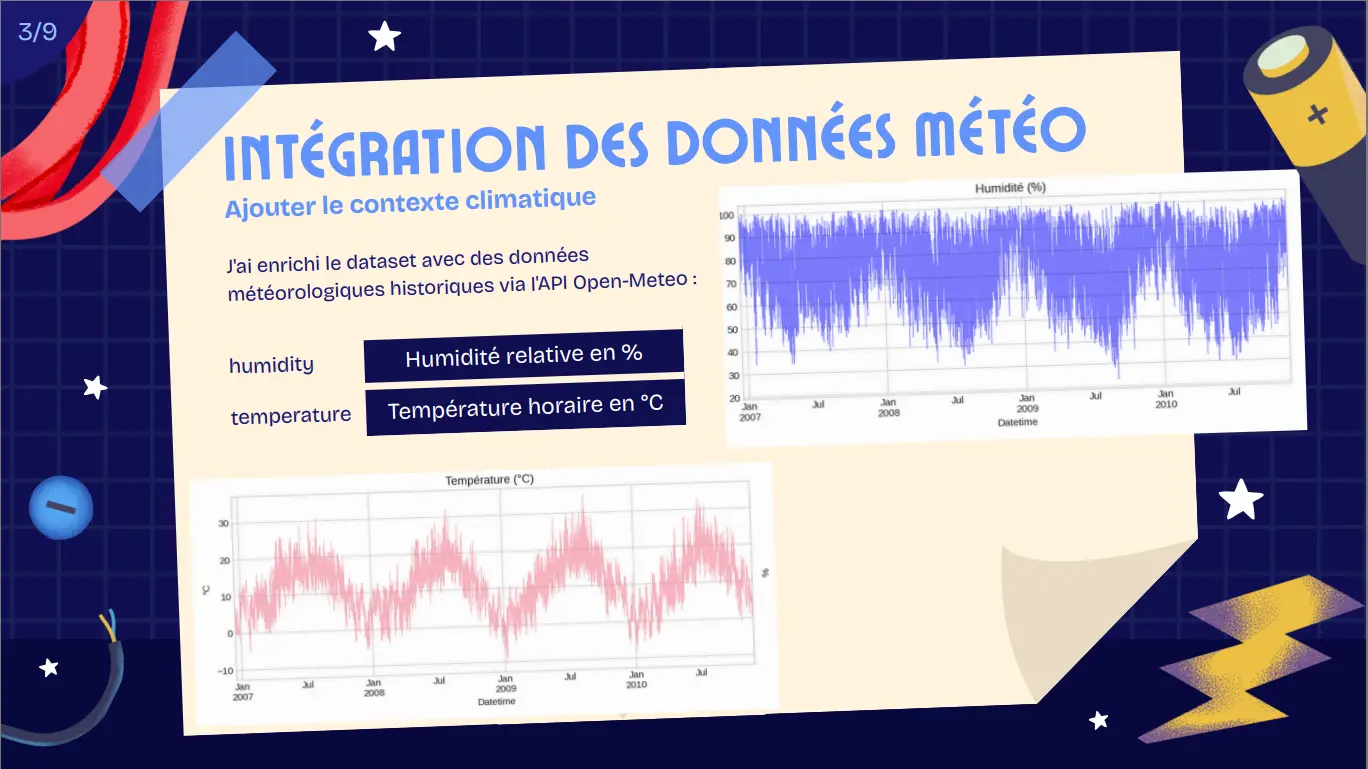

External weather data: Open-Meteo API (Sceaux) → Heating Degree, Humidity, Cold Humidity Index
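A possible way to pull the hourly weather series from Open-Meteo's historical archive endpoint is sketched below. The coordinates are rounded approximations for Sceaux, the `Europe/Paris` timezone is an assumption meant to match the household's local timestamps, and `build_weather_url`, `to_hourly_frame` and `fetch_weather` are hypothetical helpers; the derived indices (heating degree, cold humidity index) would be computed from the returned columns afterwards.

```python
import json
import urllib.parse
import urllib.request

import pandas as pd

# Open-Meteo historical-weather endpoint.
ARCHIVE_URL = "https://archive-api.open-meteo.com/v1/archive"
# Approximate coordinates of Sceaux (assumption, rounded).
SCEAUX_LAT, SCEAUX_LON = 48.78, 2.29


def build_weather_url(start_date: str, end_date: str,
                      lat: float = SCEAUX_LAT, lon: float = SCEAUX_LON) -> str:
    """Build the request URL for hourly temperature and relative humidity."""
    params = {
        "latitude": lat,
        "longitude": lon,
        "start_date": start_date,    # ISO dates, e.g. "2006-12-16"
        "end_date": end_date,
        "hourly": "temperature_2m,relative_humidity_2m",
        "timezone": "Europe/Paris",  # assumed to match the household timestamps
    }
    return f"{ARCHIVE_URL}?{urllib.parse.urlencode(params)}"


def to_hourly_frame(payload: dict) -> pd.DataFrame:
    """Turn the JSON 'hourly' block into a DataFrame indexed by timestamp."""
    frame = pd.DataFrame(payload["hourly"])
    frame["time"] = pd.to_datetime(frame["time"])
    return frame.set_index("time")


def fetch_weather(start_date: str, end_date: str) -> pd.DataFrame:
    """Download and parse the hourly series (requires network access)."""
    with urllib.request.urlopen(build_weather_url(start_date, end_date), timeout=60) as resp:
        return to_hourly_frame(json.load(resp))
```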

Workflow

Environment: Google Colab (Python 3), TensorFlow/Keras, pandas, scikit-learn, NVIDIA T4 GPU

Data preparation:

- Hourly resampling, 1.25% missing values removed

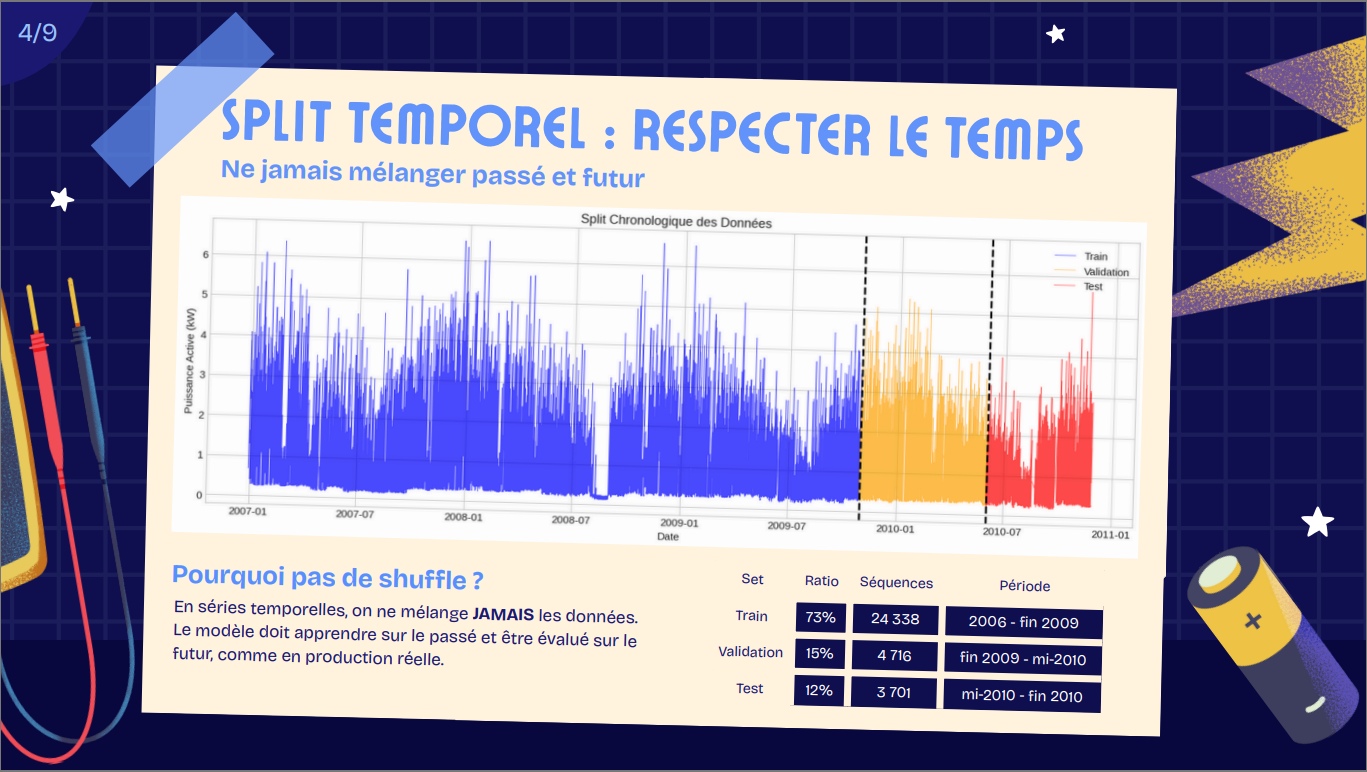

- Temporal split: Train 73% (2006-2009) / Val 15% / Test 12% (2010)

- Sliding window: 336h input (2 weeks) → 24h output

- MinMaxScaler normalization (fit on train only)
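The split, scaling and windowing steps above can be sketched with NumPy and scikit-learn. This assumes the split is taken by row fractions (73/15/12) on time-ordered data and that windows are built separately inside each split so none straddles a boundary; the helper names are hypothetical. `make_windows` turns a `(hours, features)` array into `(samples, 336, features)` inputs and `(samples, 24)` targets.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view
from sklearn.preprocessing import MinMaxScaler

INPUT_STEPS = 336   # two weeks of hourly history
OUTPUT_STEPS = 24   # next-day horizon


def chronological_split(values, train_frac=0.73, val_frac=0.15):
    """Split rows in time order (no shuffling) into train / validation / test."""
    n = len(values)
    i_train = int(round(n * train_frac))
    i_val = int(round(n * (train_frac + val_frac)))
    return values[:i_train], values[i_train:i_val], values[i_val:]


def fit_scaler_on_train(train, *others):
    """Fit MinMaxScaler on the training rows only, then apply it to the other splits."""
    scaler = MinMaxScaler()
    scaled = [scaler.fit_transform(train)] + [scaler.transform(part) for part in others]
    return scaled, scaler


def make_windows(features, target, input_steps=INPUT_STEPS, output_steps=OUTPUT_STEPS):
    """X[i] = features[i : i+input_steps]; y[i] = the next output_steps target values."""
    # sliding_window_view puts the window axis last: (n, n_features, input_steps).
    X = sliding_window_view(features[:-output_steps], input_steps, axis=0)
    X = X.transpose(0, 2, 1)  # -> (n, input_steps, n_features)
    y = sliding_window_view(target[input_steps:], output_steps)
    # Materialise contiguous float32 copies for training.
    return np.ascontiguousarray(X, dtype=np.float32), np.ascontiguousarray(y, dtype=np.float32)
```

Fitting the scaler on the training rows only keeps validation and test statistics out of the normalization; later values outside the training range simply map outside [0, 1].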

Feature engineering (34 features):

- Lag features: 1h, 2h, 3h, 6h, 12h, 24h, 48h, 168h, 336h

- Rolling stats: mean (6h, 12h, 24h, 168h, 336h), std/min/max (24h)

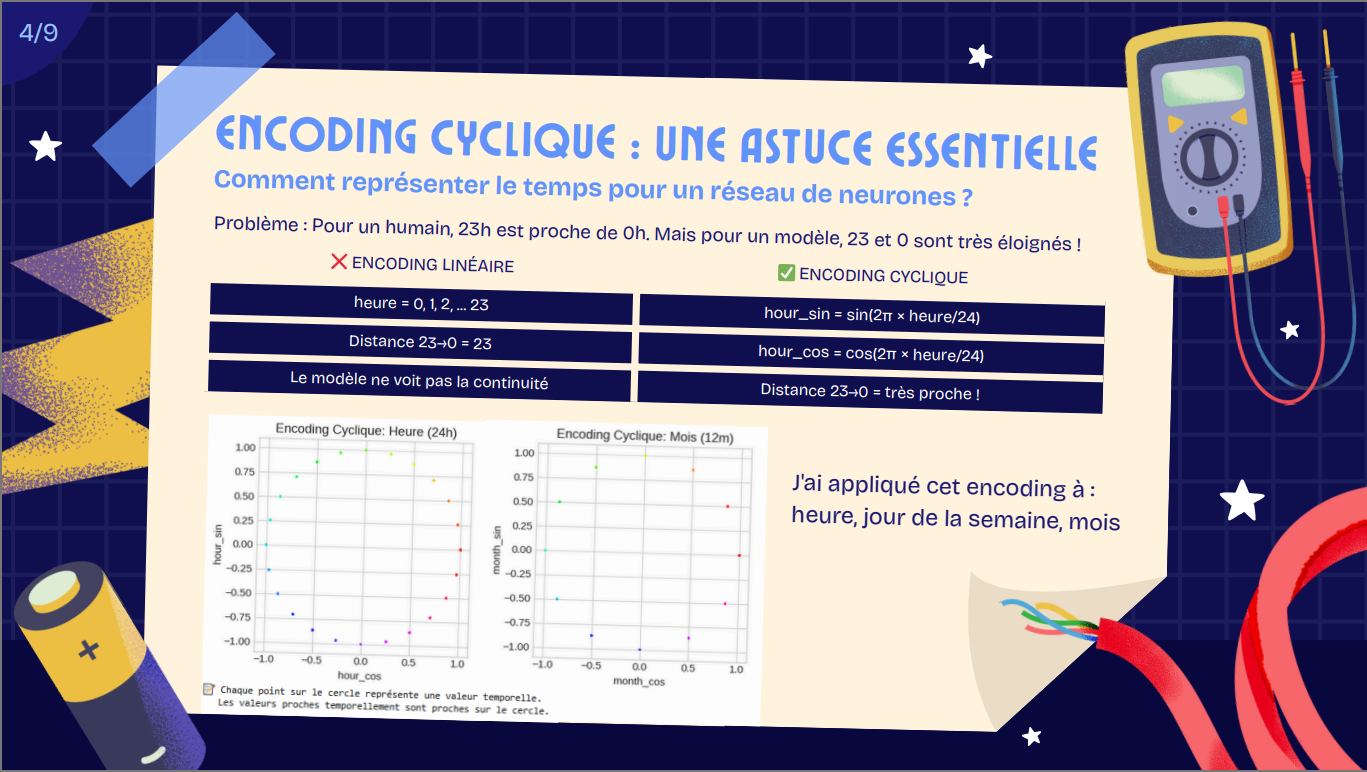

- Cyclical encoding: hour, day of week, month (sin/cos)

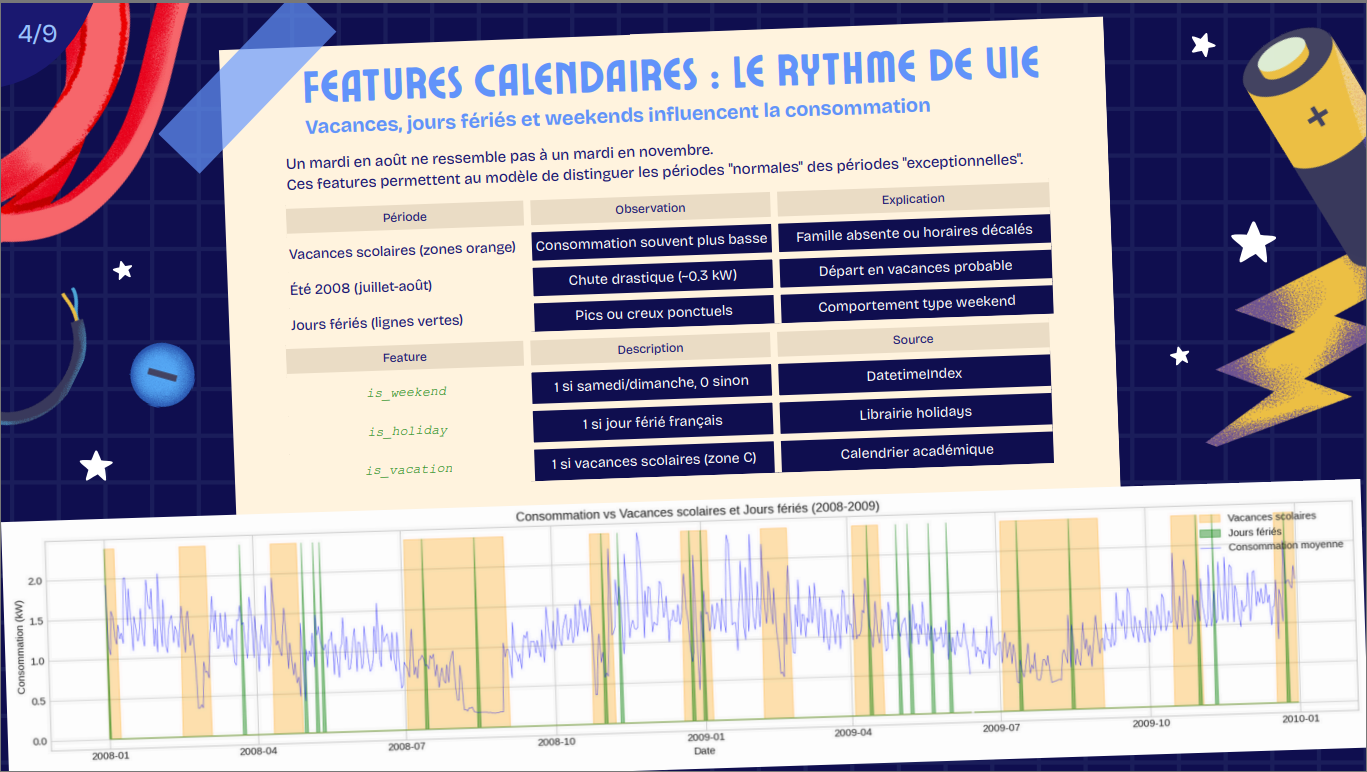

- Calendar: is_weekend, is_holiday, is_vacation

- Weather: heating_degree (base 20°C), humidity, cold_humidity_index
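Most of the features listed above can be derived from the hourly frame with pandas. This is a sketch, not the notebook's code: column names such as `lag_1h` and `roll_mean_24h` are illustrative, the rolling statistics are assumed to use only past hours (`shift(1)`) to avoid leakage, the hourly temperature column is assumed to be named `temperature_2m`, and `is_holiday`, `is_vacation` and `cold_humidity_index` are left out because their source calendar and formula are not given here.

```python
import numpy as np
import pandas as pd

LAGS_H = [1, 2, 3, 6, 12, 24, 48, 168, 336]
ROLLING_MEAN_H = [6, 12, 24, 168, 336]


def add_features(df: pd.DataFrame, target: str = "Global_active_power",
                 temp_col: str = "temperature_2m", base_temp: float = 20.0) -> pd.DataFrame:
    """Add lag, rolling, cyclical, weekend and heating-degree columns to an hourly frame."""
    out = df.copy()
    y = out[target]

    # Lag features: the target value h hours earlier.
    for h in LAGS_H:
        out[f"lag_{h}h"] = y.shift(h)

    # Rolling statistics over past hours only (shift(1) keeps the current hour out).
    past = y.shift(1)
    for w in ROLLING_MEAN_H:
        out[f"roll_mean_{w}h"] = past.rolling(w).mean()
    out["roll_std_24h"] = past.rolling(24).std()
    out["roll_min_24h"] = past.rolling(24).min()
    out["roll_max_24h"] = past.rolling(24).max()

    # Cyclical encoding: map each periodic field onto the unit circle.
    idx = out.index
    for name, values, period in (("hour", idx.hour, 24),
                                 ("dow", idx.dayofweek, 7),
                                 ("month", idx.month - 1, 12)):
        angle = 2 * np.pi * np.asarray(values) / period
        out[f"{name}_sin"] = np.sin(angle)
        out[f"{name}_cos"] = np.cos(angle)

    out["is_weekend"] = (np.asarray(idx.dayofweek) >= 5).astype(int)

    # Heating degree: how far the outdoor temperature is below the 20 °C base.
    out["heating_degree"] = (base_temp - out[temp_col]).clip(lower=0)

    # Rows without a complete 336 h history (or with other gaps) are dropped.
    return out.dropna()
```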

Model development:

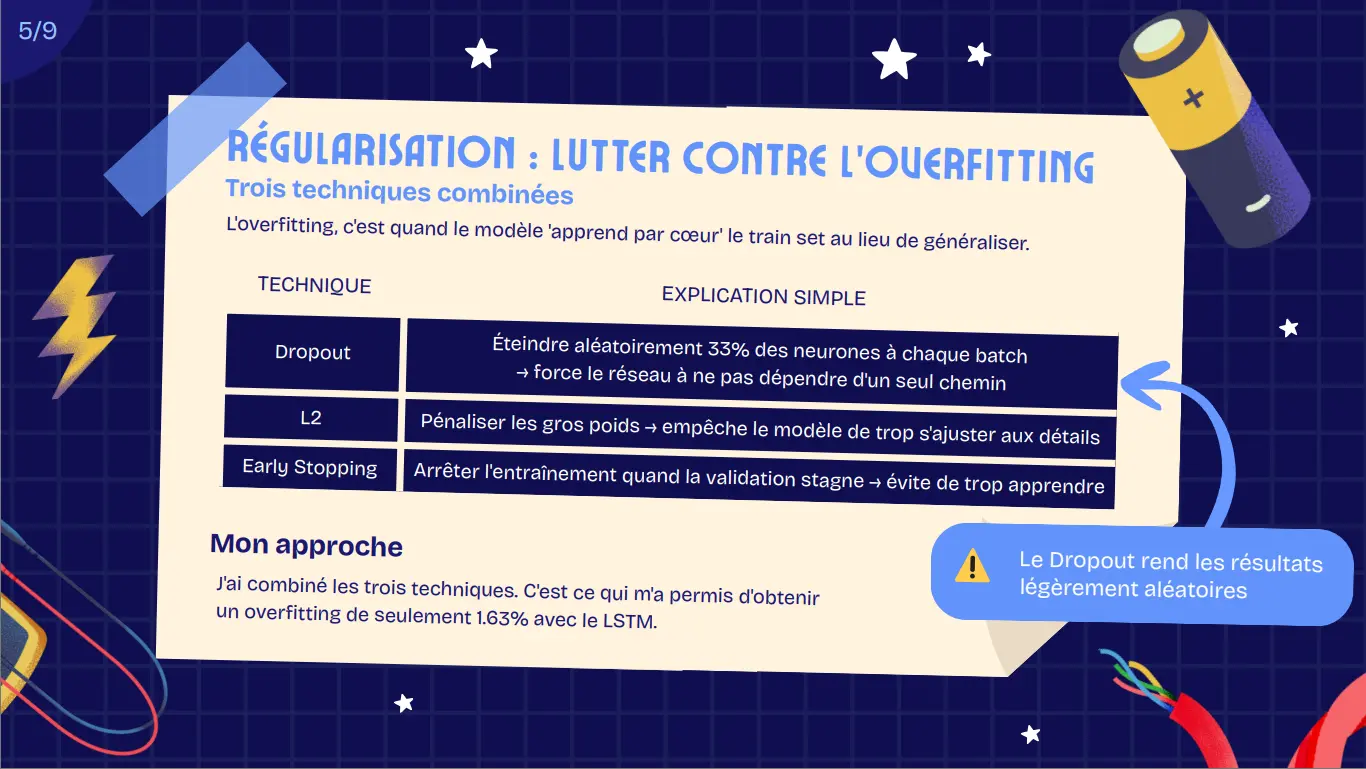

- LSTM: 2 layers (64, 32 units), dropout 0.33, early stopping

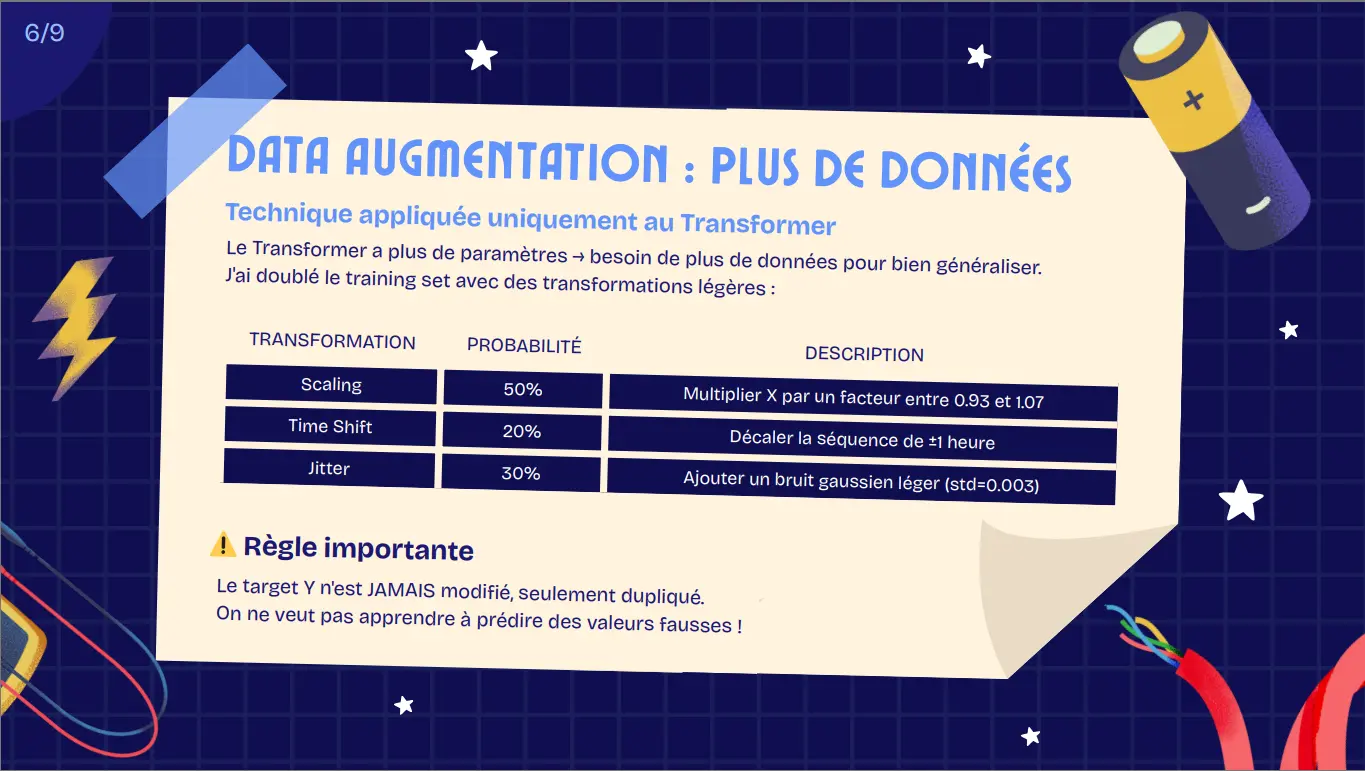

- Transformer: 2 encoder layers, positional encoding, 4 attention heads, d_model=64, L2 regularization, dropout 0.35

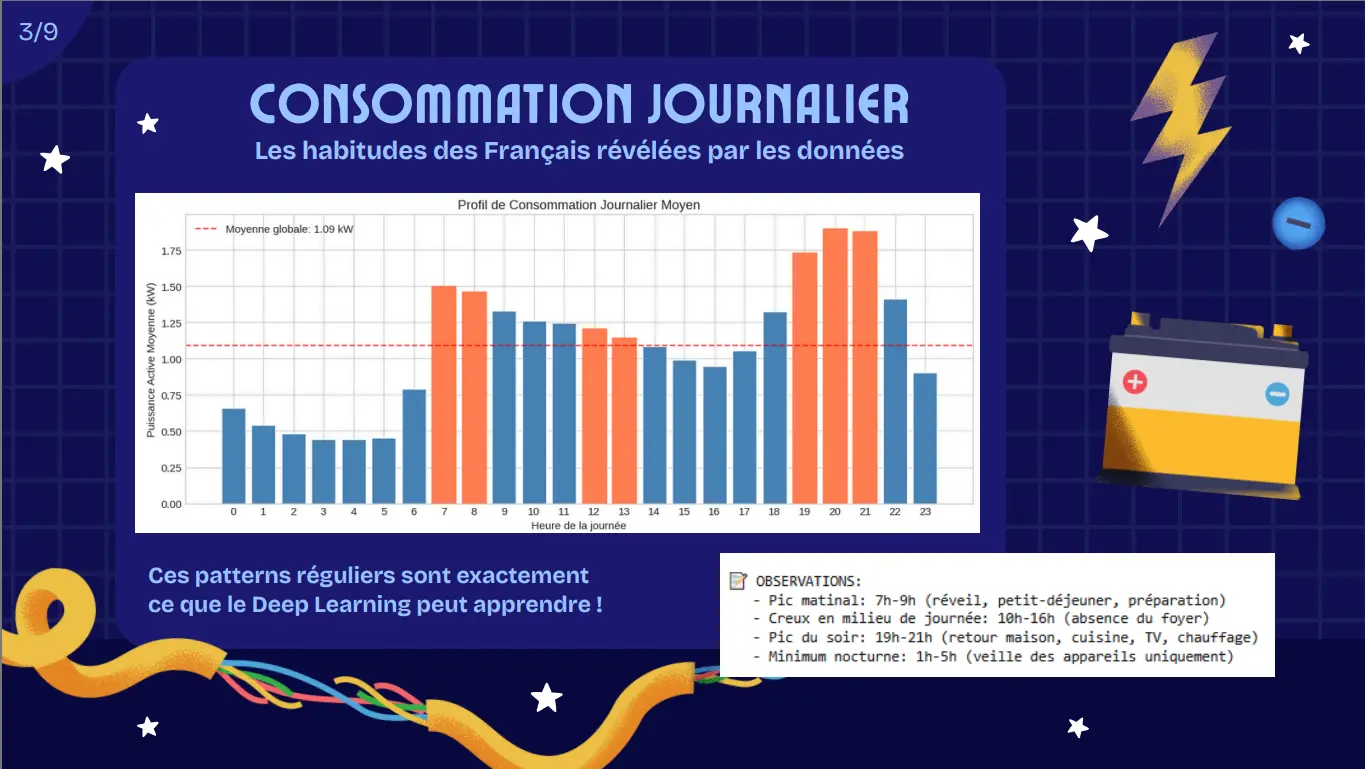
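The two architectures can be sketched in Keras. Layer sizes, dropout rates, the number of heads and `d_model` come from the description above. Everything else is an assumption for illustration: sinusoidal positional encoding, a post-norm encoder with a 128-unit feed-forward layer, an L2 factor of 1e-4, global average pooling before the 24-hour output, Adam with an MAE loss, and an early-stopping patience of 10.

```python
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

INPUT_STEPS, N_FEATURES, HORIZON = 336, 34, 24


def build_lstm(dropout: float = 0.33) -> keras.Model:
    """Two stacked LSTM layers (64 -> 32 units) with a 24-value output head."""
    inputs = keras.Input(shape=(INPUT_STEPS, N_FEATURES))
    x = layers.LSTM(64, return_sequences=True)(inputs)  # keep the sequence for layer 2
    x = layers.Dropout(dropout)(x)
    x = layers.LSTM(32)(x)                              # last hidden state only
    x = layers.Dropout(dropout)(x)
    outputs = layers.Dense(HORIZON)(x)                  # one value per forecast hour
    model = keras.Model(inputs, outputs, name="lstm_forecaster")
    model.compile(optimizer="adam", loss="mae")
    return model


class SinusoidalPositionalEncoding(layers.Layer):
    """Adds fixed sine/cosine position signals to a (batch, steps, d_model) input."""

    def __init__(self, steps: int, d_model: int, **kwargs):
        super().__init__(**kwargs)
        pos = np.arange(steps)[:, None]
        i = np.arange(d_model)[None, :]
        angle = pos / np.power(10000.0, (2 * (i // 2)) / d_model)
        table = np.where(i % 2 == 0, np.sin(angle), np.cos(angle))
        self.table = table[None].astype("float32")  # shape (1, steps, d_model)

    def call(self, x):
        return x + self.table


def encoder_block(x, d_model=64, heads=4, ff_dim=128, dropout=0.35, l2=1e-4):
    """Post-norm encoder layer: self-attention and feed-forward, each with a residual."""
    reg = keras.regularizers.l2(l2)
    attn = layers.MultiHeadAttention(num_heads=heads, key_dim=d_model // heads,
                                     dropout=dropout, kernel_regularizer=reg)(x, x)
    x = layers.LayerNormalization(epsilon=1e-6)(layers.Add()([x, attn]))
    ff = layers.Dense(ff_dim, activation="relu", kernel_regularizer=reg)(x)
    ff = layers.Dropout(dropout)(ff)
    ff = layers.Dense(d_model, kernel_regularizer=reg)(ff)
    return layers.LayerNormalization(epsilon=1e-6)(layers.Add()([x, ff]))


def build_transformer(d_model=64, heads=4, n_layers=2, dropout=0.35, l2=1e-4) -> keras.Model:
    """Dense projection + positional encoding + stacked encoder layers + 24-value head."""
    inputs = keras.Input(shape=(INPUT_STEPS, N_FEATURES))
    x = layers.Dense(d_model)(inputs)                   # project features to d_model
    x = SinusoidalPositionalEncoding(INPUT_STEPS, d_model)(x)
    for _ in range(n_layers):
        x = encoder_block(x, d_model, heads, dropout=dropout, l2=l2)
    x = layers.GlobalAveragePooling1D()(x)              # pool over the 336 time steps
    outputs = layers.Dense(HORIZON)(x)
    model = keras.Model(inputs, outputs, name="transformer_forecaster")
    model.compile(optimizer="adam", loss="mae")
    return model


# Early stopping on validation loss (the patience value is an assumption).
early_stop = keras.callbacks.EarlyStopping(monitor="val_loss", patience=10,
                                           restore_best_weights=True)
```

Either model would then be trained with `model.fit(X_train, y_train, validation_data=(X_val, y_val), callbacks=[early_stop])`.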

Insights

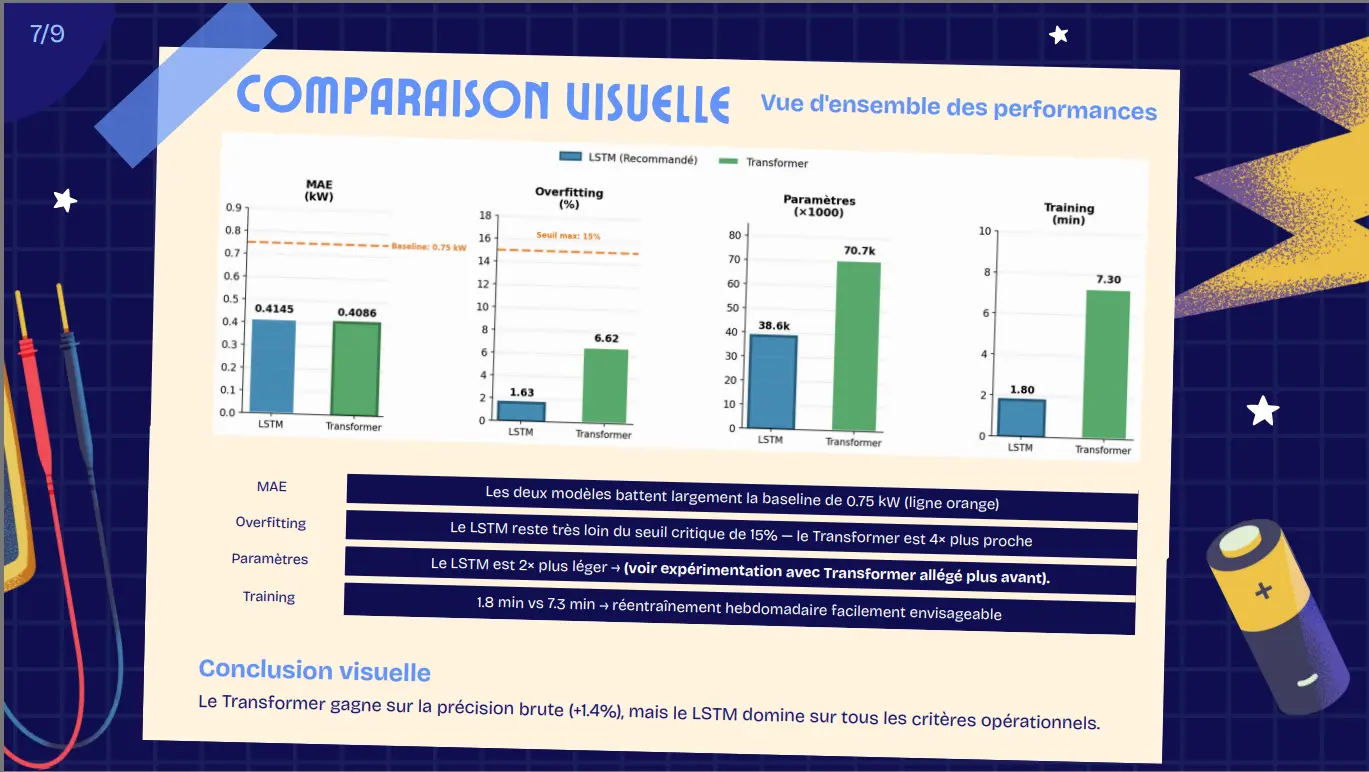

Model performance:

LSTM: MAE 0.4145 kW, overfitting gap 1.63%, training 1.8 min

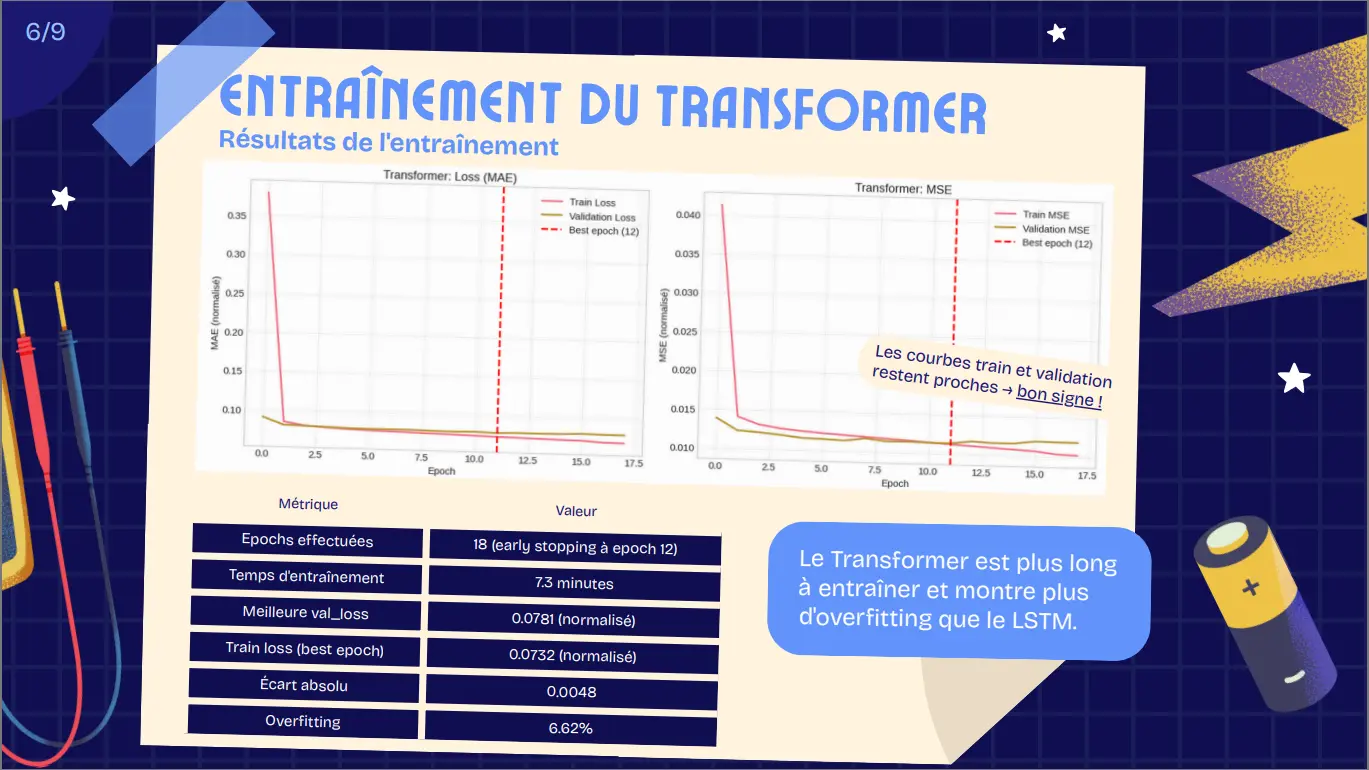

Transformer: MAE 0.4086 kW (1.4% lower than LSTM), overfitting gap 6.62%, training 7.3 min

Both models met the target (MAE < 0.5 kW).
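The comparison figures can be checked with a few helper functions. The MAE and relative-change formulas are standard; the overfitting-gap formula is an assumption (validation MAE relative to training MAE), since its exact definition is not stated here. The relative change confirms that the Transformer's MAE is about 1.4% lower than the LSTM's.

```python
import numpy as np


def mae(y_true, y_pred) -> float:
    """Mean absolute error in the target's unit (kW here)."""
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.mean(np.abs(diff)))


def relative_change_pct(reference: float, candidate: float) -> float:
    """Percent change of candidate vs reference; negative means candidate is lower."""
    return 100.0 * (candidate - reference) / reference


def overfitting_gap_pct(train_mae: float, val_mae: float) -> float:
    """ASSUMED definition: excess of validation MAE over training MAE, in % of training MAE."""
    return 100.0 * (val_mae - train_mae) / train_mae


# Transformer vs LSTM MAE, using the figures reported above.
print(round(relative_change_pct(0.4145, 0.4086), 2))  # -1.42
```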

Feature relevance (correlation with the target): lag features (lag_1h: r = 0.71) and sub-meters dominate; weather features correlate only weakly (r ≈ 0.15 to 0.20).
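Correlations like those quoted above can be computed with `DataFrame.corrwith`. This is a sketch, assuming Pearson correlation on the hourly feature frame; `target_correlations` is a hypothetical helper. Correlation with the target measures linear association only, not the weight a trained network actually gives a feature.

```python
import pandas as pd


def target_correlations(df: pd.DataFrame, target: str = "Global_active_power") -> pd.Series:
    """Pearson correlation of each feature with the target, strongest (by |r|) first."""
    corr = df.drop(columns=[target]).corrwith(df[target])
    order = corr.abs().sort_values(ascending=False).index
    return corr.reindex(order)
```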

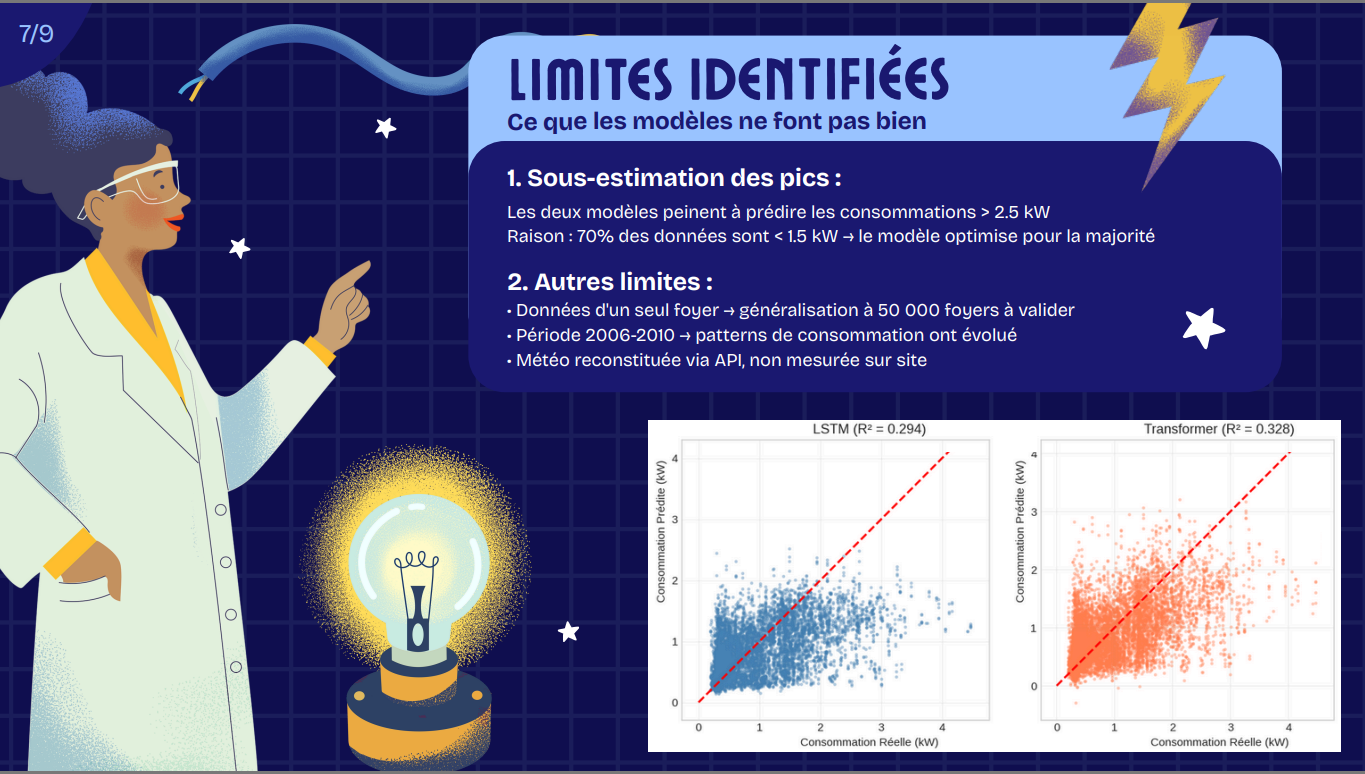

Limitations: both models underestimate peaks above 2.5 kW, and data from a single household limits generalization.

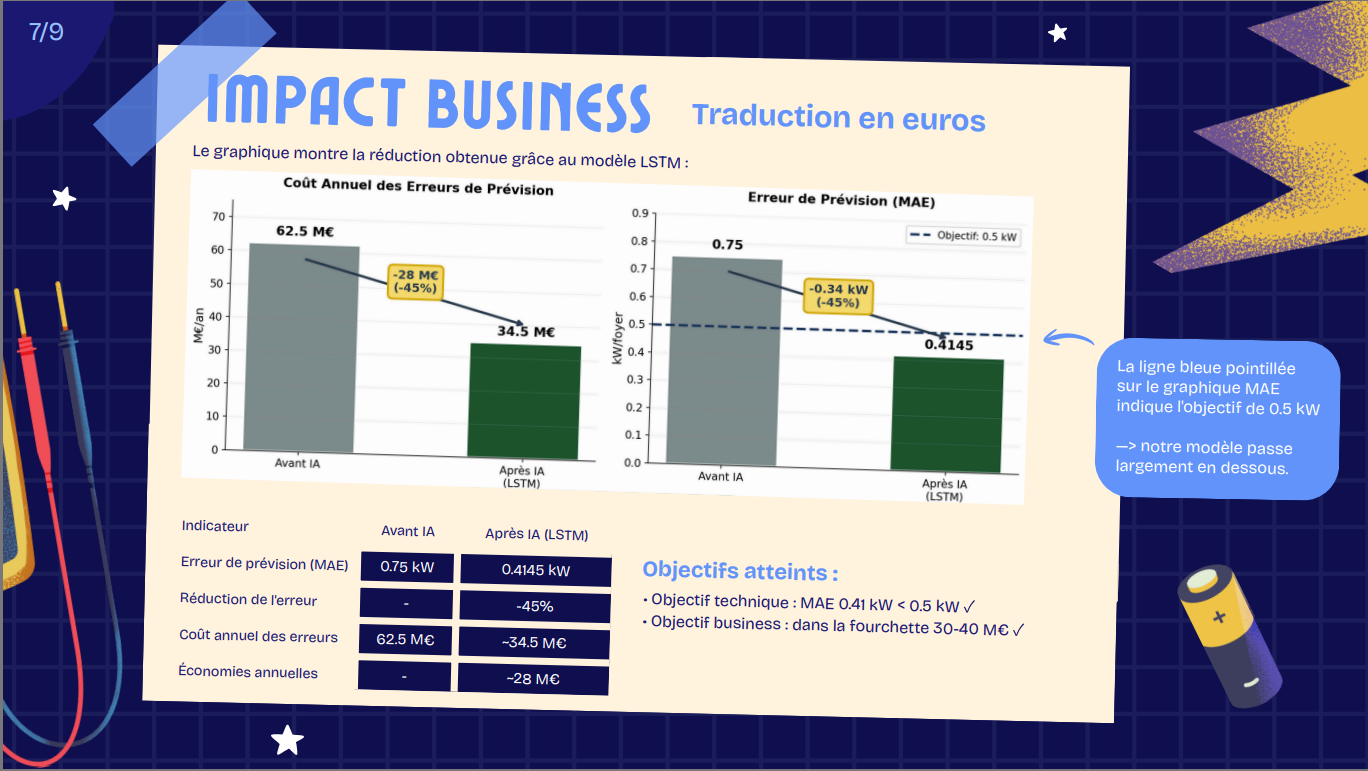
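One way to quantify the peak underestimation is to split the errors at the 2.5 kW threshold. `peak_error_report` is a hypothetical helper; a negative `bias_peak` means the model predicts less than the actual consumption during peak hours.

```python
import numpy as np


def peak_error_report(y_true, y_pred, threshold: float = 2.5) -> dict:
    """Compare errors on peak hours (actual > threshold) with the remaining hours."""
    y_true = np.asarray(y_true, dtype=float).ravel()
    y_pred = np.asarray(y_pred, dtype=float).ravel()
    peak = y_true > threshold
    err = y_pred - y_true  # signed error: negative = underestimation
    nan = float("nan")
    return {
        "peak_share": float(peak.mean()),
        "mae_peak": float(np.abs(err[peak]).mean()) if peak.any() else nan,
        "bias_peak": float(err[peak].mean()) if peak.any() else nan,
        "mae_rest": float(np.abs(err[~peak]).mean()) if (~peak).any() else nan,
    }
```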

Business Impact

- LSTM recommended for production: about 4x smaller overfitting gap (1.63% vs 6.62%), simpler architecture, and about 4x faster training (1.8 vs 7.3 min), which eases retraining

- Error reduction: 45% (MAE 0.75 → 0.4145 kW)

- Estimated annual savings: ~€28M

- Deployment roadmap: pilot on 5,000 households for 3 months, weekly retraining, MAE monitoring dashboard, hyperparameter optimization

- Scientific contribution: showed that, on a simple single-household dataset, an LSTM is more robust (less overfitting) than a Transformer for residential energy forecasting at near-identical accuracy.
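The 45% error reduction and the ~€28M savings estimate in the list above are consistent with the following arithmetic, under the assumption (not stated in the project) that provisioning losses scale linearly with MAE and that the €62.5M figure corresponds to the 0.75 kW baseline MAE:

```latex
\begin{aligned}
\text{error reduction} &= \frac{0.75 - 0.4145}{0.75} = \frac{0.3355}{0.75} \approx 0.447 \;(\approx 45\%) \\
\text{annual savings} &\approx 0.447 \times \text{€}62.5\,\text{M} \approx \text{€}27.9\,\text{M} \;(\approx \text{€}28\,\text{M})
\end{aligned}
```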

Links

Notebook (Feature Engineering & Modeling)